About This Article

I recently attended a seminar called “AWS Builders Online Series” — an online event hosted by AWS aimed at beginners, covering a broad range of AWS fundamentals.

This article introduces two topics covered in that seminar: hallucinations in generative AI, and RAG (Retrieval-Augmented Generation) as a practical countermeasure.

The seminar’s slides and recordings are available at the link in the image below. If you’re interested in the full content, going directly to the source is the most useful option.

Generative AI Basics and Amazon Bedrock

If you’re new to generative AI and Amazon Bedrock, start with the previous article. It covers generative AI fundamentals and my experience using Amazon Bedrock from the AWS console.

What Are Hallucinations?

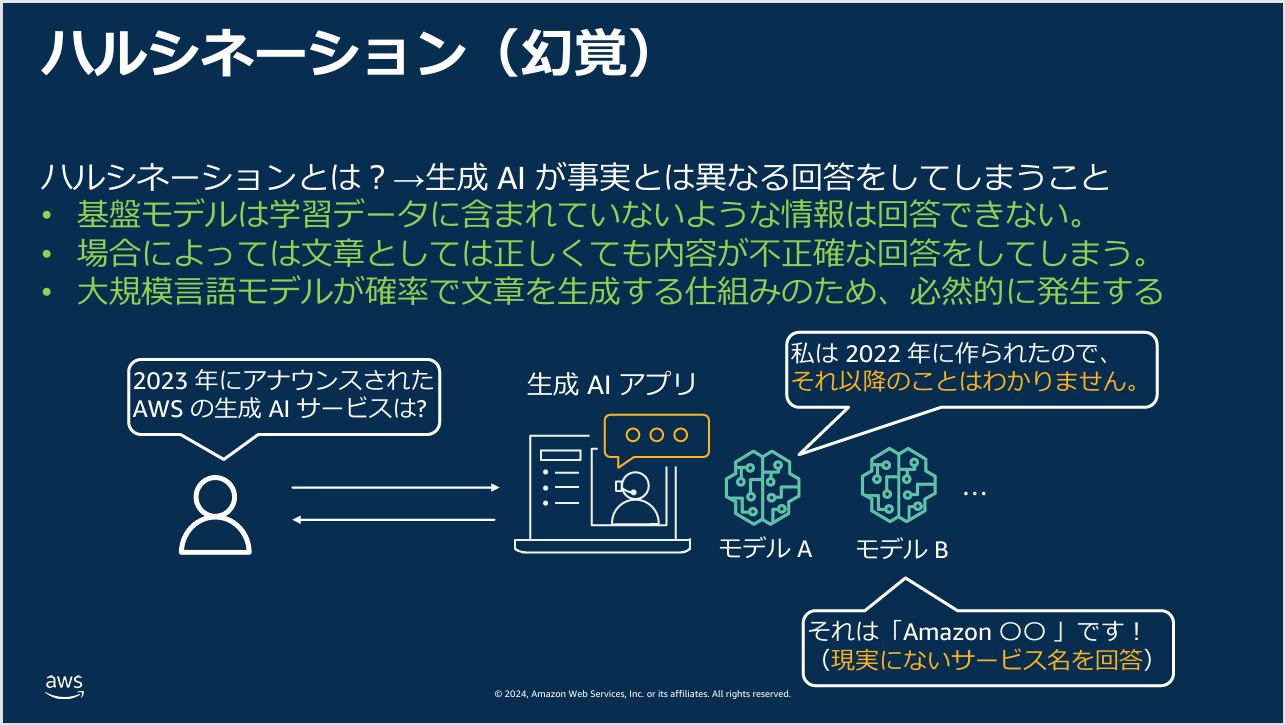

There are several known issues with generative AI in practice. One of the most significant is the phenomenon known as hallucination — where a generative AI produces a response that doesn’t match reality.

Generative AI generates responses based on its training data. That training data, however large, does not cover every fact in the world — which means there are questions the model simply cannot answer accurately.

On top of that, generative AI produces text probabilistically: it generates a response token by token, sampling each token from a probability distribution. That favors fluent, plausible continuations over verified facts, so even when factual accuracy is what’s needed, the model can produce plausible-sounding but factually wrong answers.

These two factors combine to produce hallucinations.
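The token-by-token sampling described above can be illustrated with a small sketch. This is not how any particular model is implemented; the candidate tokens, their scores, and the function names are all made up for illustration. It only shows that when the next token is drawn from a probability distribution, a plausible but wrong token can still be chosen.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities; a higher temperature flattens them."""
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(score - peak) for score in scaled]
    total = sum(exps)
    return [value / total for value in exps]


def sample_next_token(candidates, logits, temperature, rng):
    """Pick one candidate at random, weighted by its probability."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Hypothetical scores for the token that follows "The capital of Australia is".
CANDIDATES = ["Canberra", "Sydney", "Melbourne"]
LOGITS = [2.0, 1.5, 0.5]

rng = random.Random(0)  # fixed seed so repeated runs behave the same
for temperature in (0.2, 1.0):
    probs = softmax_with_temperature(LOGITS, temperature)
    picks = [sample_next_token(CANDIDATES, LOGITS, temperature, rng) for _ in range(1000)]
    wrong = sum(1 for token in picks if token != "Canberra")
    print(f"temperature={temperature}: p(Canberra)={probs[0]:.2f}, wrong picks={wrong}/1000")
```

With these made-up scores, the correct token is the most likely one at both temperatures, yet at the higher temperature a wrong but plausible token is still picked a substantial share of the time.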

What Is RAG (Retrieval-Augmented Generation)?

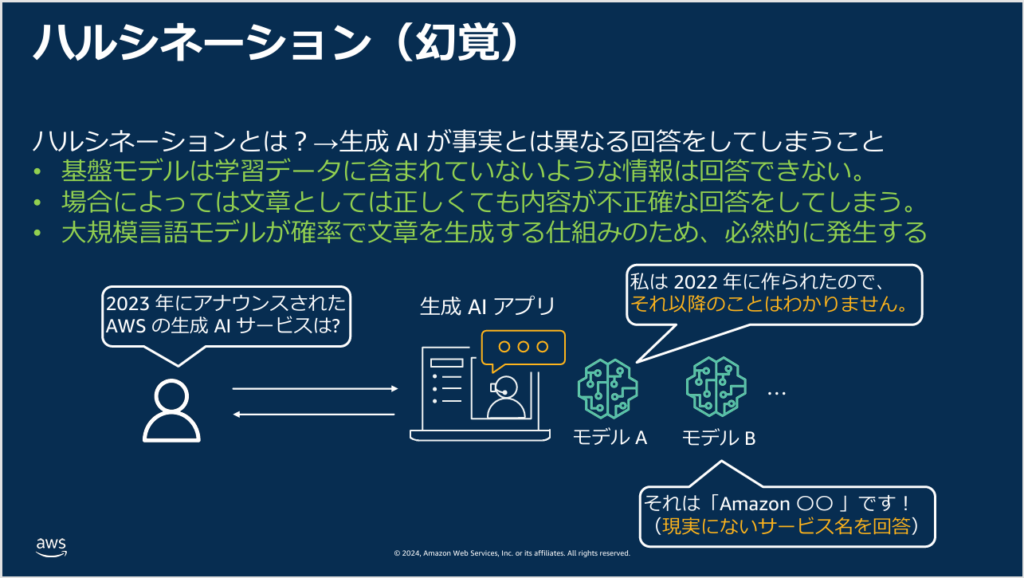

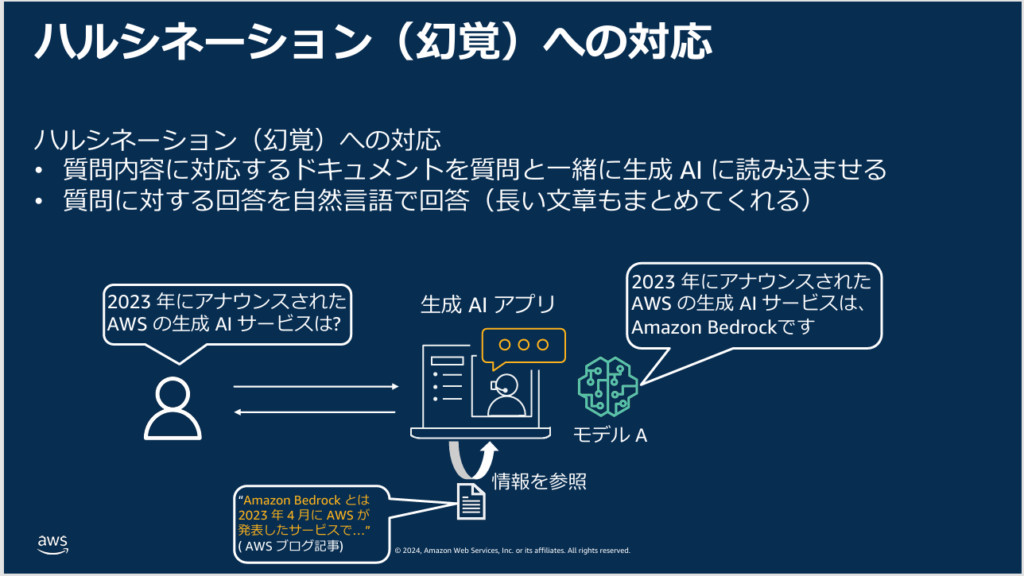

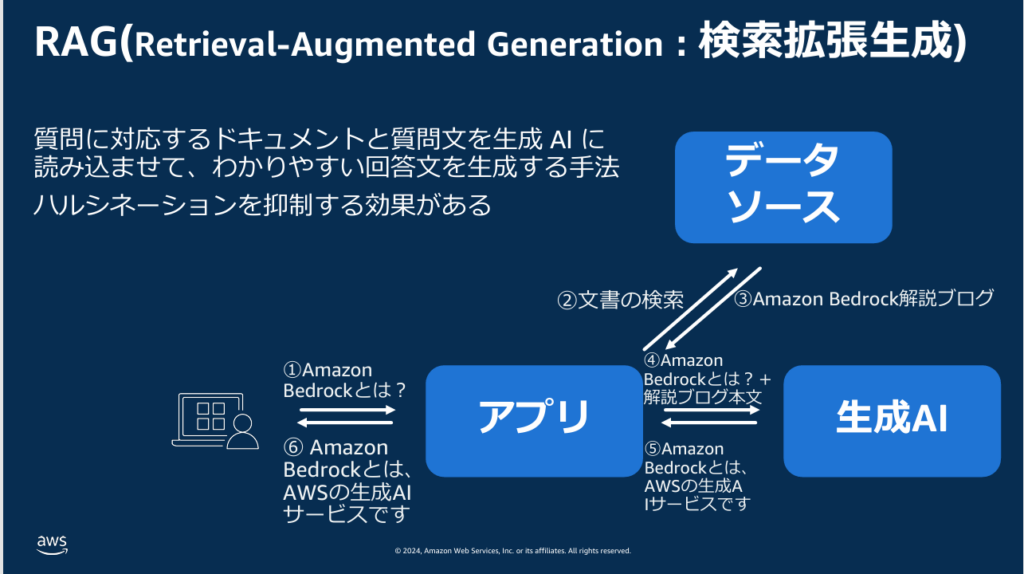

Since many hallucinations occur when the model is asked about something outside its training data, the logical fix is simple: retrieve the relevant documents and provide them alongside the question, so the model can generate its answer based on that specific content.

This approach is called RAG (Retrieval-Augmented Generation).
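As a rough illustration of the idea, the sketch below retrieves the most relevant passage for a question and builds a prompt that contains it. Everything here is an assumption made for the example: the documents are invented, the function names are hypothetical, and simple word overlap stands in for the vector search a real RAG system would typically use. The final step, sending the prompt to a model, is left out.

```python
import re

# Hypothetical internal documents that the model was never trained on.
DOCUMENTS = [
    "Expense reports must be submitted within 30 days of purchase.",
    "The office is closed on national holidays.",
    "Remote work requires manager approval in advance.",
]


def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, passages):
    """Place the retrieved passages in front of the question."""
    context = "\n".join(f"- {passage}" for passage in passages)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


question = "How many days do I have to submit an expense report?"
passages = retrieve(question, DOCUMENTS)
print(build_prompt(question, passages))
```

The model then answers from the supplied passage rather than from whatever its training data happens to contain.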

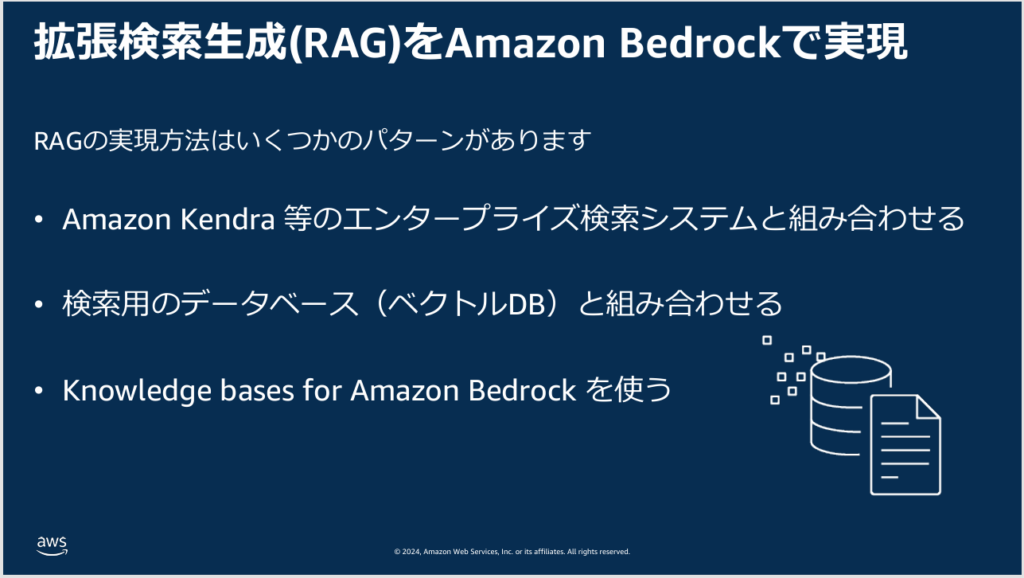

There are multiple ways to implement RAG, but what makes AWS particularly compelling here is that it offers RAG as a managed service.

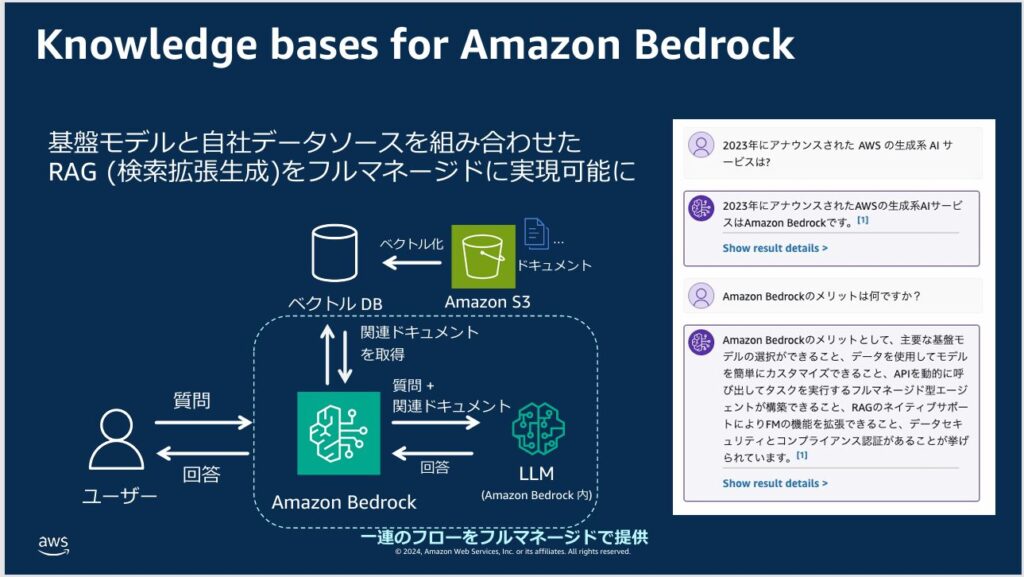

Specifically, Knowledge Bases for Amazon Bedrock lets you implement RAG as a fully managed service with minimal setup.

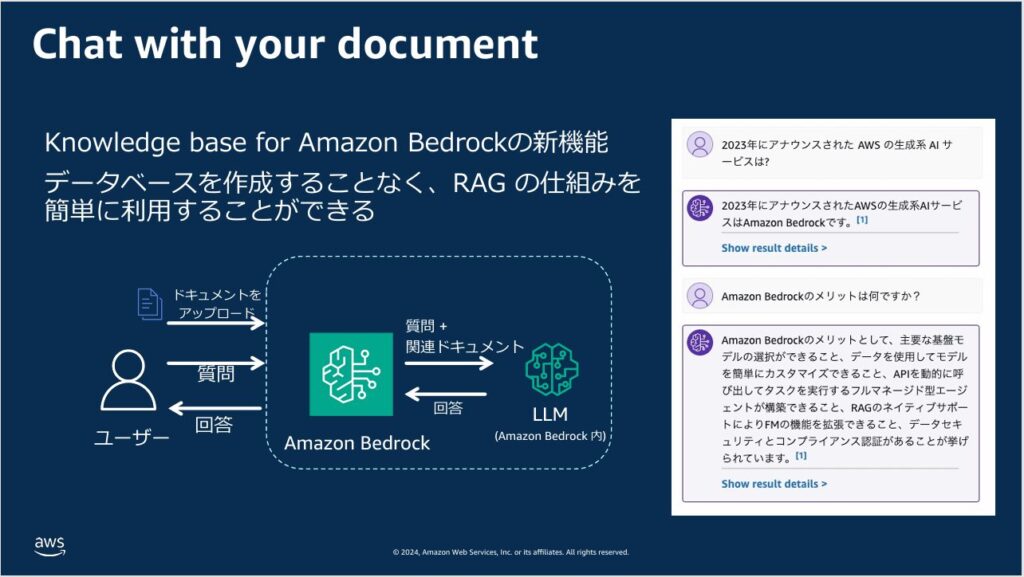

Even better, Knowledge Bases for Amazon Bedrock doesn’t require you to set up a database manually — you can simply upload your documents and use RAG immediately.
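Once a knowledge base exists, querying it from code can look roughly like the sketch below. It assumes the boto3 `bedrock-agent-runtime` client and its `retrieve_and_generate` operation; the helper names, the knowledge base ID, the region, and the model ARN are placeholders that you would replace with values from your own account. The seminar itself demonstrated the console, so treat this as one possible way to call the service, not as the method shown there.

```python
def ask_knowledge_base(client, knowledge_base_id, model_arn, question):
    """Send a question to a knowledge base and return the generated answer text."""
    response = client.retrieve_and_generate(
        input={"text": question},
        retrieveAndGenerateConfiguration={
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": knowledge_base_id,
                "modelArn": model_arn,
            },
        },
    )
    return response["output"]["text"]


def main():
    """Example entry point; requires boto3, AWS credentials, and a real knowledge base."""
    import boto3  # imported here so the helper above can be tested without AWS access

    client = boto3.client("bedrock-agent-runtime", region_name="us-east-1")
    answer = ask_knowledge_base(
        client,
        knowledge_base_id="YOUR_KB_ID",  # placeholder: your knowledge base ID
        model_arn="YOUR_MODEL_ARN",  # placeholder: ARN of a model enabled in your account
        question="How many days do I have to submit an expense report?",
    )
    print(answer)
```

Calling `main()` sends a real request to AWS, so it needs valid credentials and will incur usage charges. Passing the client in as a parameter keeps `ask_knowledge_base` easy to exercise with a stub during testing.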

Hallucination is one of generative AI’s most serious limitations. AWS makes it straightforward to address that limitation using RAG through Amazon Bedrock.

Summary

This article covered hallucinations, one of generative AI’s core problems, and RAG (Retrieval-Augmented Generation) as a practical countermeasure.

You’ll sometimes hear pundits on television confidently declare that generative AI isn’t suitable for business use. But the root problems they’re pointing to, hallucinations and similar issues, have practical countermeasures such as RAG. Knowing that these countermeasures exist is the key takeaway.

Building a foundation model from scratch requires highly specialized knowledge and resources. But building knowledge of RAG and how to implement it is a much more achievable goal.

Going forward, professionals who can build and implement RAG-based systems will be increasingly valued in real-world settings. If you’re interested, now is a great time to start learning.

For the previous article covering generative AI basics and Amazon Bedrock console usage, see below: