About This Article

This article is my attempt to organize and summarize the current state of artificial intelligence (AI), a topic that has been generating enormous attention. It reflects the situation as of January 2024.

The AI space evolves so rapidly that keeping up with everything is nearly impossible for anyone outside the field. This article focuses on the most widely discussed topics, with the goal of giving readers who aren’t deeply familiar with AI a rough sense of where things stand.

I’m still learning about AI myself — writing articles like this helps me deepen my own understanding, and I hope it serves as a useful reference for others who are just starting to explore the topic.

What Is AI, Anyway?

Before diving into the current state of AI, let me briefly explain what AI actually is. (Feel free to skip ahead if you already know.)

AI stands for “Artificial Intelligence.” The term is attributed to computer scientist John McCarthy, who introduced it in a 1955 proposal for the Dartmouth summer workshop. He later described AI as “the science and engineering of making intelligent machines, especially intelligent computer programs.”

However, that description never became a formal definition. Even today, scientists disagree on how to define AI.

The general public’s intuitive sense — that AI refers to “machines or programs capable of intelligent behavior (doing things like humans do)” — is roughly in line with what McCarthy described. But the concepts of “intelligence” and “intellect” themselves aren’t clearly defined, which is why AI also lacks a precise definition.

This brings us to a key distinction: “narrow AI (weak AI)” versus “general AI (strong AI).”

Strong AI (Artificial General Intelligence)

In the strict sense, “strong AI” refers to an AI that possesses intelligence and consciousness like a human being.

Think of the robots from classic science fiction — companions who live alongside humans and assist them in all aspects of life. That’s strong AI.

As of the time this article was written, strong AI has not been achieved. Many people in the field regard it as the ultimate destination of AI research.

In practice, what we actually use today is “weak AI” — AI specialized for a specific domain that can simulate human-like behavior within that domain, without genuinely possessing intelligence or consciousness.

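The term “strong AI” was coined by philosopher John Searle in 1980, in the paper that introduced his “Chinese room” thought experiment. Searle was responding to a line of thinking that traces back to mathematician Alan Turing’s 1950 paper on machine intelligence.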

It’s also worth noting: while “strong AI” and “general AI” used to be used interchangeably, they’re increasingly being distinguished. “Artificial General Intelligence” (AGI) now refers to AI that can handle a wide range of tasks — even without possessing human-like consciousness — while “strong AI” is reserved for AI with genuine intelligence and mind.

Weak AI (Narrow AI)

“Weak AI,” as described above, refers to AI that lacks consciousness or genuine intelligence.

Every AI currently in use falls into this category — specialized systems that simulate human-like behavior within specific domains.

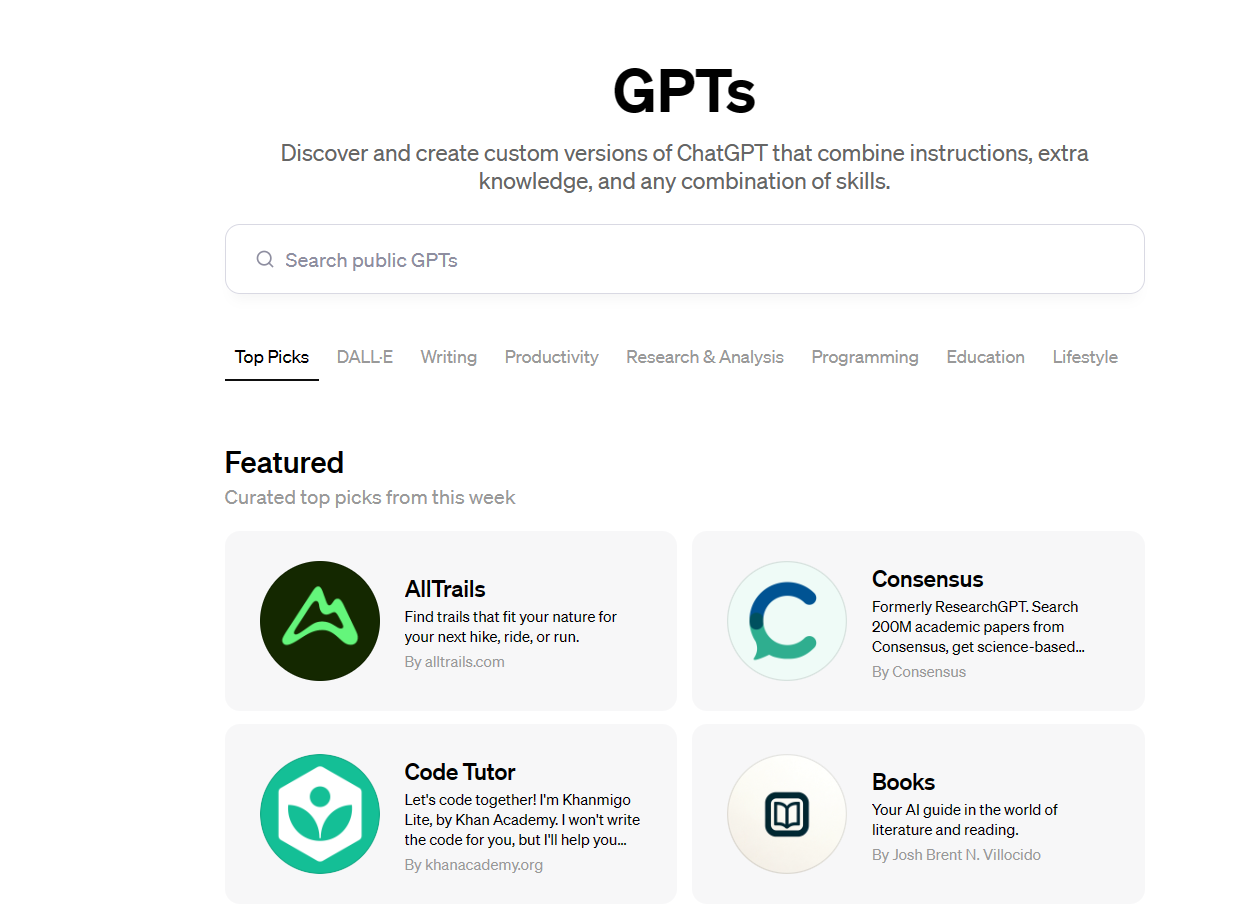

Recent “generative AI” tools are all examples of weak, narrow AI — including ChatGPT (which responds to questions with startlingly human-like answers), and image generation tools like Midjourney and Stable Diffusion.

What Is Generative AI?

Generative AI is, literally, a catch-all term for AI that generates content — text, files, images, video, and so on. There is no strict formal definition.

The most well-known generative AI tool is ChatGPT, released on November 30, 2022. It stunned the world by responding to inputs in ways that felt remarkably like a real human being.

Image generation tools like Midjourney and Stable Diffusion also emerged, letting anyone create illustrations easily — another moment of widespread astonishment.

2023 was the year generative AI expanded dramatically and entered mainstream awareness. From 2024 onward, adoption is expected to keep growing, and using generative AI for work and creative pursuits will likely become normal within a few years.

That said, there are legitimate concerns about generative AI — the most prominent being copyright infringement in the training data (text and images). Some countries have introduced regulations; lawsuits have been filed.

Additional concerns include AI confidently providing incorrect answers, and the spread of “deepfake” videos used in harmful disinformation and scams. Generative AI is not a universal solution to every problem.

Even so, I believe generative AI will become widespread despite the concerns, much as personal computers and the internet did in their early days, simply because of how useful it is.

Whether you can keep pace with generative AI may well determine how effective you remain in the years ahead.

Note: the cover image for this article was generated using Stable Diffusion.

A Brief History of AI

Generative AI didn’t appear out of nowhere — AI research has been quietly advancing for decades, and generative AI is the result of that long progression finally reaching a broad audience.

Understanding AI’s history helps put everything in context. I’ll cover only the major milestones here; for more detail, Wikipedia’s “History of artificial intelligence” article is a good reference.

(Note: the original article included data tables here.)

Simply reading through the milestones won’t give you a complete picture, but the rough mental model is this: today’s text-based generative AI runs on large language models (LLMs), which are built on the “Transformer” architecture and made possible by “deep learning” and “big data.” It is the cumulative result of AI’s entire history, not something that arrived suddenly.

(Image generation AI like Stable Diffusion is built on a different core technology, diffusion models, combined with components such as a VAE and a text encoder, rather than on LLMs directly. That level of detail gets technical fast, so I’ll leave it here.)

What Is a Large Language Model (LLM)?

Let me briefly explain what LLMs actually are, since they came up in the history section.

An LLM (Large Language Model) is a natural language processing (NLP) model trained using deep learning and big data — essentially, an AI that has learned from enormous volumes of text and can now process and generate natural-sounding language.
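To make "learns from text, then generates language" a little more concrete, here is a minimal sketch in Python. It is a toy and not how a real LLM works internally: it only counts which word follows which in a tiny made-up corpus and then always picks the most frequent next word. The function names `train_bigram_model` and `generate` and the sample sentences are my own illustrative assumptions. Real LLMs use neural networks with billions of parameters, but the basic loop of predicting the next piece of text from what came before is the same idea.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(model, start, max_words=5):
    """Extend `start` by repeatedly picking the most common next word."""
    output = [start]
    for _ in range(max_words - 1):
        candidates = model.get(output[-1])
        if not candidates:
            break  # this word was never followed by anything in training
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)


# Tiny made-up "training data" (an assumption, for illustration only).
corpus = "the cat sat on the mat . the cat sat on the sofa ."
model = train_bigram_model(corpus)
print(generate(model, "the", max_words=5))  # prints "the cat sat on the"
```

The toy model can only repeat patterns it has literally seen, which is one way to picture why the volume of training text matters so much for real LLMs.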

When you use a tool like ChatGPT, you interact with it through a product interface — but under the hood, what’s doing the actual work is an LLM like GPT-3.5 or GPT-4. The LLM processes your input and produces a response; the product (ChatGPT) is the layer you interact with.

If you think of it like a computer: the LLM is something like the operating system (Windows, macOS), and generative AI tools are the applications (like Excel) running on top of it. (Not a perfect analogy, but useful.)
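The split between "the product you talk to" and "the model doing the work" can be sketched in a few lines of Python. This is a hypothetical illustration, not how ChatGPT is actually implemented: `toy_llm` is a stand-in function I made up in place of a real model, and `ChatProduct` is an invented name for the application layer that keeps the conversation history and builds the text handed to the model.

```python
from typing import Callable, List


def toy_llm(prompt: str) -> str:
    """Stand-in for a real LLM (hypothetical): text in, text out."""
    return f"(model reply to {len(prompt.split())} words of input)"


class ChatProduct:
    """The layer a user interacts with: stores history and calls the model."""

    def __init__(self, llm: Callable[[str], str]):
        self.llm = llm  # any text-in, text-out model can be plugged in here
        self.history: List[str] = []

    def send(self, user_message: str) -> str:
        self.history.append(f"User: {user_message}")
        prompt = "\n".join(self.history)  # the model sees the whole conversation
        reply = self.llm(prompt)
        self.history.append(f"Assistant: {reply}")
        return reply


chat = ChatProduct(toy_llm)
print(chat.send("Hello there"))
```

Because the product only depends on a "text in, text out" function, a different model could be swapped in without changing what the user sees.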

Most well-known LLMs are built on the “Transformer” deep learning architecture. The GPT series is a good example — the name stands for “Generative Pre-trained Transformer,” and the “Transformer” at the end reflects this foundational architecture.

Many companies are now releasing their own LLMs, and competition in this space will continue to intensify. As noted, LLMs themselves are like the brain or OS layer — ordinary users don’t interact with them directly. What we’ll likely see going forward is more products and APIs built on top of various LLMs, with users choosing among them based on their needs.

It’s also possible that dominant tools like ChatGPT will integrate access to multiple LLMs and automatically select the most appropriate one for each task.

LLM accuracy is primarily influenced by three factors: compute (training resources), data volume, and parameter count. Other variables, such as training data quality, also matter. A higher parameter count generally correlates with higher accuracy, but it isn’t a guarantee.
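These three factors are linked. As a rough sketch, the snippet below uses a commonly cited rule of thumb from the research literature: training compute is about 6 floating-point operations per parameter per training token. The rule itself is an approximation, and the function name `estimate_training_flops` and the model sizes are hypothetical values I picked only for illustration, not figures for any real model.

```python
def estimate_training_flops(parameter_count: float, training_tokens: float) -> float:
    """Rule-of-thumb estimate: roughly 6 operations per parameter per token."""
    return 6.0 * parameter_count * training_tokens


# Hypothetical model sizes, chosen only for illustration.
small = estimate_training_flops(parameter_count=1e9, training_tokens=2e10)
large = estimate_training_flops(parameter_count=1e10, training_tokens=2e11)

print(f"small model: {small:.1e} FLOPs")
print(f"large model: {large:.1e} FLOPs")
print(f"ratio: {large / small:.0f}x")
```

Under this approximation, a model with ten times the parameters trained on ten times the data needs about a hundred times the compute, which is part of why training the largest models is so expensive.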

The Future of AI: The Singularity, AGI, and ASI

Having covered recent AI developments and their history, let me close by touching on what comes next.

There are three key concepts worth knowing: the Singularity, AGI, and ASI.

The Singularity (Technological Singularity)

The term “Singularity” was popularized by Vernor Vinge, most notably in his 1993 essay “The Coming Technological Singularity.” Since then, many prominent thinkers have debated when it will arrive.

The Singularity refers to the moment when AI acquires intelligence surpassing that of humans, after which AI begins developing further AI and technological progress accelerates exponentially. It’s called the “technological singularity” because it marks a turning point beyond which change becomes unpredictable.

Predictions vary: Vinge’s essay expected it by around 2030; Hans Moravec predicted 2040; Ray Kurzweil predicted 2045. All of these fall within roughly the next two decades, not the distant future.

Whether the Singularity arrives when AGI is achieved, or when AGI acquires genuine strong AI-level intelligence, remains unclear. But AI will certainly continue to advance.

AGI (Artificial General Intelligence)

“AGI” has largely replaced “general-purpose AI” as the dominant term in recent discussions.

AGI stands for “Artificial General Intelligence” — AI capable of handling a wide variety of tasks in a general-purpose way. Originally used interchangeably with “strong AI,” it increasingly refers to AI that can handle broad domains without necessarily possessing human-level consciousness.

What’s consistent across definitions: AGI can perform the intellectual tasks humans can, at human level or above.

AGI could also engage in self-directed learning, acquiring new knowledge on the fly to handle situations it has never encountered before.

The societal implications of AGI would be enormous. AGI could diagnose patients more accurately than doctors; it could potentially manage governments and local administrations more efficiently than most politicians.

Of course, even with AGI, realizing its full potential would require compatible physical hardware, software infrastructure, and legal frameworks for determining what AGI may and may not do. But once AGI exists, it’s hard to imagine society not reorganizing around it.

Many researchers expect AGI to be realized eventually. SoftBank CEO Masayoshi Son has predicted it will arrive within 10 years. My personal sense, given the pace of recent AI progress, is that it may come even faster.

https://www.youtube.com/watch?v=Gh0xzbgCIgg

ASI (Artificial Superintelligence)

ASI — Artificial Superintelligence — is the concept that comes after AGI: an intelligence so far beyond human capacity that it could effortlessly solve problems and generate ideas that are currently beyond human imagination.

Some predict that an ASI-level entity would eventually be able to control weather, human behavior, and society itself with god-like capability.

If ASI were achieved, society would look so different from today as to be unrecognizable. What that world would mean for humanity — I genuinely can’t predict.

Summary

That’s a high-level overview of where AI stands today.

This article was written for people who aren’t deeply familiar with AI, to give them a grounding in the topics that keep coming up in AI discussions. I intentionally avoided getting too technical.

The AI landscape changes so quickly that even what I’ve written here may already be outdated by the time you read it. When that happens, I’ll aim to write updated articles.

Going forward, I plan to write more focused, accessible articles on specific tools and topics in AI. If that sounds interesting, I hope you’ll come back.

AI is a field that will only grow more important in the coming years. Let’s keep learning together.
